You can find more resources on this topic in my last three posts:

High Availability Test Plan of a BizTalk Solution

High Availability Test Plan of a BizTalk Solution – Methodology – requirements and expectation

High Availability Test Plan of a BizTalk Solution – A sample of a real approach

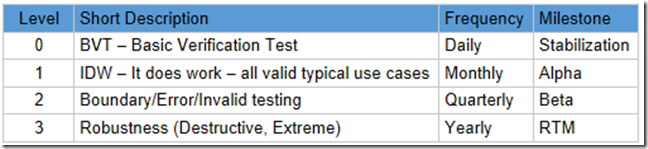

The best strategy is to manage a BizTalk solution or project as a “product” with its own milestones. We can then define a table of test levels, each tied to a milestone:

Level 0 – Basic Verification Test (also known as BVT)

The purpose of BVTs is to prove that the solution works for the simplest use cases. Passing 100% means the solution may be used for further testing.

Level 0 test cases have the following properties:

- Simple valid test case for major features of the solution

- Typically Manual (Automated if we can)

- Manual cases are run weekly

- Automated cases are run daily

- Isolated component tests with known good data

- Includes components used very frequently

- Runs on recommended platform

BVTs do not contain manual test cases

- Excludes components used infrequently

- Excludes integrated subsystem test

- Excludes atypical platform test

BVTs are used to prove that a build is good. They are used by integration teams to prove compatibility with other products. They should also be used by developers before check-in so they don’t break the build. The suite for any given solution should run in under 1 hour, otherwise it adversely affects the check-in process. A balance needs to be struck between proving that the major core features of the solution are working and ensuring that the BVTs run quickly so they are easy to use. For example, if BVTs take 4-5 hours to run, Development will not use them for daily check-ins.

Automation: you need to consider development cost vs. execution cost when determining whether it makes sense to automate.

Also factor in whether subsequent product bundling compatibility testing may take advantage of the automated cases.
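The development-versus-execution trade-off can be made concrete with a simple break-even calculation. The sketch below is only an illustration: the function name `break_even_runs`, its parameters, and the sample figures (minutes of effort) are assumptions, not numbers from a real project.

```python
import math
from typing import Optional


def break_even_runs(automation_dev_cost: float,
                    manual_cost_per_run: float,
                    automated_cost_per_run: float) -> Optional[int]:
    """Return the number of runs after which automating a test case
    has paid for itself, or None if automation never pays off.

    All costs must use the same unit (minutes in the example below).
    """
    saving_per_run = manual_cost_per_run - automated_cost_per_run
    if saving_per_run <= 0:
        # An automated run is no cheaper than a manual one.
        return None
    return math.ceil(automation_dev_cost / saving_per_run)


if __name__ == "__main__":
    # Assumed figures: 960 minutes to automate, 30 minutes per manual run,
    # 6 minutes of attention per automated run.
    print(break_even_runs(960, 30, 6))
```

If the same automated cases are reused elsewhere, for example in compatibility testing, the number of runs grows and the break-even point is reached sooner.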

Example: A Level 0 purchase order test case would have a single simple line item and validate that it got processed correctly.
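As a rough illustration, a Level 0 case of this kind might look like the sketch below. The `process_order` function is a hypothetical stand-in for the real solution entry point (in practice the test would submit a message to the solution and inspect what comes out), and the field names are assumptions as well.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LineItem:
    sku: str
    quantity: int
    unit_price: float


def process_order(order_id: str, items: List[LineItem]) -> dict:
    """Hypothetical stand-in for the solution under test."""
    total = sum(item.quantity * item.unit_price for item in items)
    return {"order_id": order_id, "status": "Processed", "total": total}


def test_l0_single_line_item() -> dict:
    """Level 0: one simple, known-good line item, happy path only."""
    result = process_order("PO-0001", [LineItem("WIDGET", 2, 10.0)])
    assert result["status"] == "Processed"
    assert result["total"] == 20.0
    return result


if __name__ == "__main__":
    print(test_l0_single_line_item())
```

The point of the sketch is its scope: one valid input, one check that the order went through, nothing about limits or error handling.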

Level 1 – Validity Testing – (also known as IDW – It Does Work)

The purpose of L1 tests is to prove that the typical valid use cases of the solution work as intended. Passing 100% means the solution may be used for external development as well as major testing cycles for solution releases. L1 test cases have the following properties:

- Valid typical test case for all features of the solution including minor features

- Basic Integrated System Tests – test with known dependencies and related features

- Typically Manual (Automated if we can)

- RC Breadth Pass

- Basic, simple developer and user scenarios

- Includes variations of platforms that are supported

IDW builds are typically made public on a restricted distribution basis (alpha or non-production betas) and given to select partners as well as internal customers. Their primary use is to further development and get feedback from external sources. However, passing L1 tests is NOT sufficient to support production usage of the solution.

Scenarios differ from features in that they are designed to mimic the end-to-end process a customer goes through in a use case of the solution.

Scenarios may also utilize other features or software tools in order to accomplish the job.

Because of this, it is expensive to execute scenarios in the automated daily manner that is typical for software products. For this reason, we have developed the following test case level definitions for scenario test levels.

Example: A L1 purchase order test case would have 10 line items, with discounts and changes from a prior order. It would validate that it got processed correctly.

Level 2 – (Invalid/Error/Boundary Testing) Production Beta

L2 test cases prove that the solution works at the defined limits for each feature. L2 tests also prove that known error conditions are handled correctly. Valid responses to invalid data or error conditions are tested.

- Extended Integrated System Tests – test with non-standard situation mix.

- Boundary Conditions

- Invalid/Error Condition

- Typical end to end scenarios – dev through deployment to production testing

- Performance, stress and scale tests that are used to meet customer/solution ship criteria

Passing L2 tests indicates a valuable and robust build: one that behaves acceptably even when used incorrectly.

Example: An L2 purchase order test case would have 100 line items (the product's supported maximum number of line items), with one of every kind of discount and changes from a prior order. It would validate that it got processed correctly.

Example: Another L2 purchase order test case would have 101 line items. It would validate that the system responds correctly to the fact that this exceeds the system's known limit for line items and rejects the order.
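The two L2 examples form a classic boundary pair: one case exactly at the limit and one just past it. A minimal sketch of that pair is shown below; `process_order`, `OrderRejected` and `make_items` are hypothetical names standing in for the real solution and test helpers, and the discount and prior-order variations are left out for brevity.

```python
from typing import List

MAX_LINE_ITEMS = 100  # supported maximum, taken from the example above


class OrderRejected(Exception):
    """Raised by the stand-in processor when an order violates a limit."""


def process_order(line_items: List[dict]) -> dict:
    """Hypothetical stand-in for the solution under test."""
    if len(line_items) > MAX_LINE_ITEMS:
        raise OrderRejected(
            f"{len(line_items)} line items exceeds limit of {MAX_LINE_ITEMS}"
        )
    return {"status": "Processed", "line_count": len(line_items)}


def make_items(count: int) -> List[dict]:
    """Build `count` trivial line items."""
    return [{"sku": f"SKU-{i:03d}", "quantity": 1} for i in range(count)]


def test_l2_at_limit_is_processed() -> None:
    """Boundary: exactly the maximum number of line items must succeed."""
    result = process_order(make_items(MAX_LINE_ITEMS))
    assert result == {"status": "Processed", "line_count": 100}


def test_l2_over_limit_is_rejected() -> None:
    """Error condition: one item past the limit must be rejected."""
    try:
        process_order(make_items(MAX_LINE_ITEMS + 1))
    except OrderRejected:
        return  # expected outcome
    raise AssertionError("order with 101 line items was not rejected")


if __name__ == "__main__":
    test_l2_at_limit_is_processed()
    test_l2_over_limit_is_rejected()
    print("L2 boundary cases passed")
```

Note that the second case passes only when the system rejects the order: at this level a correct response to invalid input counts as a success.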

Level 3 – Robustness Testing (Destructive testing – Extreme testing)

The solution may still ship even if a problem is found with this type of test, depending on the scenario. Level 3 testing is very important for understanding the true quality of the solution. Failed L3 test cases must be characterized for the customer either by KB (if not public), solution docs (readme/known issues/troubleshooting guide), or core docs for solution characterization.

- Depth/Robustness Stabilization

- Complex repro – Unlikely extreme scenarios.

- Fail and recover scenarios (denial of service, security breach, etc.)

- Fault Tolerance

- Extreme deployment and load scenarios.

- Extreme Performance, Stress and Scalability cases used to characterize limits of the product

- Security Hacker testing

- Complex end to end scenarios – disaster recovery, over throttled runtime servers

- May include 3rd party software that customers typically also install on the same server

Example: An L3 purchase order test case would change the configuration property for the maximum number of line items to maxint and send in a 1 GB purchase order. It would validate that the system either responds correctly to the fact that this exceeds some limit or processes the order correctly.